Responsible Use of AI in Medical Education

Module 3.

Purpose and Topics

Purpose

This module prepares medical educators to use AI responsibly, ethically and effectively across academic, clinical and scholarly contexts. As AI becomes increasingly integrated into health professions education, faculty require a nuanced understanding of their professional responsibilities when using these tools.

The goal is not simply to learn how to use AI, but to develop the judgment necessary to integrate it safely into educational settings and the environments where students learn clinical decision-making.

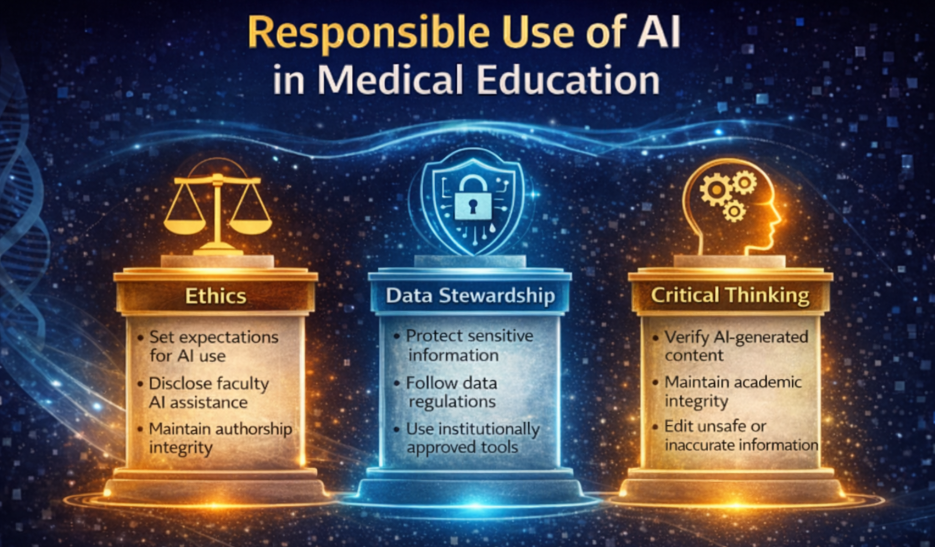

This module outlines three core domains of responsible AI use. More detailed guidance is available through institutional policies and professional organizations, some of which are included in the supplementary materials.

Topics/Learning Objectives

Upon completion of this module, individuals will be able to:

- Define responsible, ethical and professional AI use in the context of medical education and emerging institutional standards.

- Describe the core domains of responsible AI use, including ethics and academic integrity, data stewardship and critical thinking.

- Model responsible AI practices for learners and contribute to a culture of transparency and professionalism.

- Apply critical thinking when reviewing AI-generated output and when using AI to develop instructional materials, assessments, case scenarios or scholarly work.

What Is Responsible AI Use in Medical Education?

Responsible AI use refers to the thoughtful, ethical and professionally grounded integration of AI tools into teaching, assessment, scholarship and academic decision-making.

It involves using AI in ways that:

Align with institutional policies and professional standards

Maintain academic integrity and honesty

Respect ethical, professional and regulatory obligations

Promote responsible stewardship of data

Support educational goals without replacing human expertise

Responsible use emphasizes judgment rather than technical expertise. Faculty do not need to be AI specialists, but they must make sound, professional decisions about when, how and why AI is used.

Part 1.

Ethical & Transparent Use

AI use must reflect core academic values: honesty, professionalism, fairness, accountability and respect for patient and learner privacy.

Best Practices

1. Stay Aligned with Institutional Guidelines for AI Use

Many medical schools, clinics and departments provide AI guidance, which may include:

Approved tools across public, enterprise and research environments

Data governance and security protocols

Guidance for teaching, learning and scholarship

Expectations for citation and disclosure of AI use

When uncertainty exists, faculty should consult IT, compliance or data governance offices.

2. Establish and Model Academic Integrity in AI Use

Faculty should maintain transparency about how AI contributes to their work. This includes:

Acknowledging when AI has generated or significantly shaped content

Explaining how AI tools were used in workflows

Identifying where faculty judgment informed the final output

Teaching learners how to appropriately disclose their own AI use

Why this matters: Transparency builds trust, reinforces professionalism and models responsible behavior for learners.

Examples of inappropriate use include:

Requiring learners to use AI tools that are not equitably accessible

Allowing AI to generate fabricated references

Presenting AI-generated content as original expert work

Using AI for scholarly work without meaningful review or editing

Failing to disclose AI assistance when it influences academic output

3. Establish Written Expectations and Guidelines for Learners

In addition to following institutional policies, faculty should clarify for learners:

What types of AI use are permitted in an assignment or research project

What types are prohibited (e.g., answering exam questions)

How students must disclose their AI use

How AI misuse will be handled

Clear expectations reduce misunderstandings and academic misconduct. Responsible use of AI can be demonstrated through simple practices within teaching environments.

Examples include:

Explaining to learners when AI tools were used to help generate teaching materials

Setting course-level expectations for acceptable AI use

Reviewing AI-assisted student work for originality and integrity concerns

Encouraging learners to critically evaluate AI-generated content

In Conclusion

AI policies vary widely across institutions, and they evolve quickly. Best practices include:

Staying updated on institutional AI guidance and approved tools

Consulting IT/compliance before using AI with institutional, learner or patient-related information

Disclosing when AI meaningfully contributes to instructional or scholarly content

Setting clear expectations for learner use of AI in courses or programs

Part 2.

Data Stewardship and Security

Faculty must also consider how AI tools handle data. Many publicly available AI tools process user inputs outside institutional systems, meaning information entered into these tools may not remain private.

For this reason, it is important to protect sensitive educational, research and clinical data.

Best Practice

1. Follow Institutional Policies Regarding Data Stewardship

Faculty should ensure their use of AI aligns with institutional data security guidelines. This requires not only familiarity with relevant policies, but also an understanding of the rationale behind them. Key responsibilities include:

Handling sensitive data appropriately, in compliance with regulations such as FERPA, HIPAA and applicable research data protection standards.

Understanding institutional data protections, including those embedded within enterprise-level AI systems.

Distinguishing between personally identifiable information and publicly accessible data.

Clearly disclosing how AI-generated outputs (e.g., meeting notes) will be reviewed, stored and used.

Adhering to Institutional Review Board requirements when applicable.

Following guidance from institutional policies and professional organizations regarding AI use in education and healthcare.

More information is available in the AI Governance section.

What Is Personally Identifiable Information?

Personally Identifiable Information (PII) refers to any information that can directly or indirectly identify an individual.

In medical education and healthcare environments, PII often overlaps with Protected Health Information.

Examples include:

Names

Dates of birth

Medical record numbers

Contact information

Student ID numbers

Clinical case details that could identify a patient

Uploading identifiable information into non-approved AI tools may violate institutional policies or legal protections such as FERPA, HIPAA or research data regulations.

Faculty should assume that data entered into public AI tools may not remain private.

Faculty Practice Examples

Examples of responsible data practices include:

Avoiding the upload of real patient or student data into public AI tools

Choosing enterprise AI tools when working with institutional or research data

Removing identifying information from clinical cases before using AI to generate educational materials

Distinguishing between public AI tools and institutionally approved or enterprise systems

Applying data minimization and de-identification principles

Following institutional and regulatory data protection requirements
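
For faculty who prepare case materials with scripts, the sketch below illustrates what a first-pass redaction step might look like before any text is shared with an AI tool. It is a minimal illustration, not an approved method: the pattern names, the `redact` function and the sample note are all hypothetical, and simple pattern matching misses names and free-text clinical details. It does not by itself satisfy HIPAA, FERPA or institutional de-identification requirements, so manual review and institutional guidance still apply.

```python
import re

# Hypothetical patterns for illustration only. Real de-identification must
# follow institutional policy and cover many more identifier types
# (names, addresses, rare diagnoses, and so on).
PATTERNS = {
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "MRN": re.compile(r"\bMRN[:#\s]*\d+\b", re.IGNORECASE),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each pattern match with a bracketed placeholder such as [DATE]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


# Fabricated sample note; it contains no real patient data.
case_note = "Pt seen 03/14/2024, MRN: 48213. Contact: 555-201-3344 or jdoe@example.org."
print(redact(case_note))
```

A placeholder such as `[DATE]` keeps the case readable for teaching purposes while dropping the specific value, which is one simple way to apply data minimization.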

Part 3.

Applying Critical Thinking and Discernment in Evaluating AI Output

AI can be a valuable tool in supporting the development of educational and research materials; however, its use requires careful discernment and the ability to critically evaluate AI-generated outputs. This topic will be addressed more comprehensively in Module 6. In general, medical educators should critically review AI-generated output, considering:

Whether the information is accurate

Whether it reflects current clinical and educational standards

Whether it introduces bias or misinformation

Whether it aligns with program goals and the intended learner level

AI-generated material should always be reviewed thoroughly before use in educational settings. Faculty are responsible for ensuring that content is accurate, safe and appropriate for learners. Because generative AI systems produce responses based on patterns in language rather than verified knowledge, outputs may at times be incomplete, unrealistic or potentially unsafe.

Accordingly, faculty should:

Verify the accuracy and appropriateness of AI-generated content

Edit AI-generated case materials to remove unrealistic or unsafe clinical elements

Ensure that teaching materials align with current medical standards and guidelines

Review any AI-generated references or sources for accuracy and validity

AI can effectively support educational preparation, but it should not replace faculty judgment or professional expertise.

Smart Use Tips

The following strategies can help faculty use AI tools effectively and responsibly:

Verify AI-generated academic or clinical content before use

Design AI educational activities to support reasoning, not replace it

Stay alert to confident language that lacks evidence

Ask AI to provide sources and verify them independently

Consider whether AI-generated material is appropriate for the educational context

Responsible use of AI combines ethical practice, policy awareness and professional judgment.

Supplementary Materials & Resources

Supplementary Materials

PowerPoint

Flashcards

Google NotebookLM or GEM

Resources

Articles

Gin BC et al. Entrustment and EPAs for Artificial Intelligence in Health Professions Education. Academic Medicine. 2025.

Komasawa N, Yokohira M. Generative artificial intelligence (AI) in medical education: a narrative review of the challenges and possibilities for future professionalism. Cureus. 2025;17(6):e86316.

Masters K. Ethical use of artificial intelligence in health professions education: AMEE Guide No. 158. Medical Teacher. 2023.

Videos

Websites

Note: The text and graphics in these modules were co-developed with the assistance of generative AI tools such as OpenAI’s ChatGPT, Google’s Gemini and NotebookLM and Microsoft’s Copilot, drawing on the indicated reference materials. The materials were then edited for relevance and accuracy.