Generative AI and Large Language Models

Module 2.

Purpose and Topics

Purpose

To introduce the mechanisms, strengths and limitations of Large Language Models (LLMs).

Topics/Learning Objectives

Upon completion of this module, individuals will be able to:

Define Generative AI and Large Language Models

Describe How LLMs Are Trained

Identify and Describe Risks Such as Bias, Hallucinations and Other Factors

Utilize Quick Tips to Evaluate AI Output

Topic 1

Define Generative AI and Large Language Models

What Are Generative AI and Large Language Models?

In Module 1, we defined generative AI (GenAI) as a subset of AI capable of producing new content, such as text, images or computer code. Tools such as OpenAI’s ChatGPT and Adobe’s Firefly are built on machine learning and deep learning models trained on large amounts of data of multiple types.

A Large Language Model (LLM) is a type of generative AI model trained to recognize, interpret and generate language. You’ve probably interacted with one, such as ChatGPT or Claude. LLMs are designed to simulate human conversation, answer questions, generate summaries and help brainstorm ideas.

Topic 2

Describe How LLMs Are Trained

Four Key Stages of LLM Training

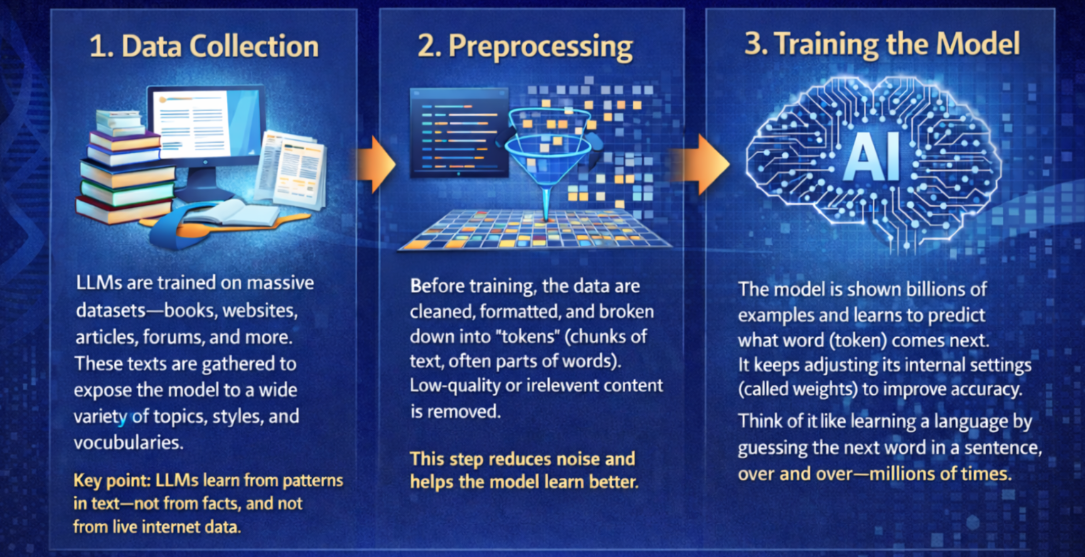

1. Data Collection

- LLMs are trained on massive datasets, sometimes called a data corpus, drawn from books, websites, articles, forums and more. These texts are gathered to expose the model to a wide variety of topics, styles and vocabularies.

Key point: Most LLMs learn from patterns in the text of their data corpus, and many do not have live access to the internet.

2. Preprocessing

- Before training, the data are cleaned, formatted and broken down into “tokens” (chunks of text, often parts of words). Low-quality or irrelevant content is removed.

This step reduces noise and improves the model's learning.
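The preprocessing step can be illustrated with a minimal sketch. The function names `clean` and `tokenize` are hypothetical, and splitting long words into fixed four-character pieces is only a stand-in: production tokenizers typically use a learned subword vocabulary (for example, byte-pair encoding) rather than fixed-size chunks.

```python
import re

def clean(text: str) -> str:
    """Remove leftover HTML tags and collapse repeated whitespace."""
    text = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", text).strip()

def tokenize(text: str, chunk: int = 4) -> list[str]:
    """Toy tokenizer (an assumption, not a real LLM tokenizer):
    lowercase the text, split it into words and punctuation marks,
    then break each word into pieces of at most `chunk` characters."""
    tokens = []
    for word in re.findall(r"[a-z0-9]+|[^\sa-z0-9]", text.lower()):
        tokens.extend(word[i:i + chunk] for i in range(0, len(word), chunk))
    return tokens

raw = "<p>The   patient has hypertension.</p>"
tokens = tokenize(clean(raw))
print(tokens)
# ['the', 'pati', 'ent', 'has', 'hype', 'rten', 'sion', '.']
```

Notice that a long word such as "hypertension" becomes several tokens, which is why tokens are described as parts of words rather than whole words.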

3. Training the Model

- The model is shown billions of examples and learns to predict what word (token) comes next. It keeps adjusting its internal settings (called weights) to improve accuracy.

Think of it like learning a language by guessing the next word in a sentence, over and over, millions of times.
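The guess-and-adjust loop described above can be sketched at a very small scale. This is an illustration under strong simplifying assumptions, not how a production LLM is built: the toy model looks only at the single previous word, the ten-word corpus is invented for the example, and names such as `weights`, `train` and `predict_next` are hypothetical. Real LLMs use neural networks with billions of weights, but the idea of nudging weights to make the correct next token more likely is the same.

```python
import math

# Invented ten-word "corpus" for illustration only.
corpus = "the patient has a fever the patient has a cough".split()
vocab = sorted(set(corpus))
index = {word: i for i, word in enumerate(vocab)}
pairs = [(index[a], index[b]) for a, b in zip(corpus, corpus[1:])]

# weights[i][j] is the model's score for word j following word i.
weights = [[0.0] * len(vocab) for _ in vocab]

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def average_loss():
    """Average surprise (cross-entropy) at the true next word; lower is better."""
    return sum(-math.log(softmax(weights[p])[n]) for p, n in pairs) / len(pairs)

def train(epochs=200, learning_rate=0.5):
    """Repeatedly guess the next word and nudge the weights toward the answer."""
    for _ in range(epochs):
        for prev, nxt in pairs:
            probs = softmax(weights[prev])
            for j in range(len(vocab)):
                target = 1.0 if j == nxt else 0.0
                weights[prev][j] -= learning_rate * (probs[j] - target)

def predict_next(word):
    """Return the word the model scores highest after `word`."""
    scores = weights[index[word]]
    return vocab[scores.index(max(scores))]

loss_before = average_loss()
train()
loss_after = average_loss()
print(predict_next("the"), predict_next("patient"))  # patient has
print(loss_after < loss_before)                       # True
```

The model has no notion of what a fever is. It has only learned which words tend to follow which, which is worth keeping in mind when reading the limitations discussed later in this module.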

4. Human Feedback (for some models)

- For some models, such as ChatGPT, human reviewers also rank the model’s outputs. This helps fine-tune the model’s tone, ethics and usefulness.

This is part of a process called Reinforcement Learning from Human Feedback (RLHF).
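One common way to make use of reviewer rankings is to convert them into pairwise preferences ("this output is better than that one"), which can then serve as training signal. The sketch below shows only that conversion step, not the reinforcement learning itself. The function name `ranking_to_pairs` and the example outputs are hypothetical, and whether any particular product handles rankings exactly this way is an assumption.

```python
from itertools import combinations

def ranking_to_pairs(ranked_outputs):
    """Turn one reviewer's ranking (best first) into
    (preferred, rejected) pairs. Hypothetical helper for illustration."""
    # combinations() keeps the input order, so the first item of each
    # pair is always the one the reviewer ranked higher.
    return list(combinations(ranked_outputs, 2))

# Invented example: three candidate answers, ranked best to worst.
ranked = [
    "Clear answer that notes its own uncertainty",
    "Correct but overly terse answer",
    "Confident answer containing a fabricated citation",
]

pairs = ranking_to_pairs(ranked)
for preferred, rejected in pairs:
    print(f"prefer {preferred!r} over {rejected!r}")
```

A ranking of three outputs yields three comparisons, so a modest amount of reviewer effort produces a larger set of preference examples.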

What LLMs Do and Don’t Do Well

✔️ What They Do Well

- Generate coherent, logical text

- Help explain concepts or brainstorm

- Identify common patterns

❌ What They Don’t Always Do Well

- Access up-to-date facts (unless connected to live tools)

- Understand meaning like a person

- Guarantee accuracy or cite original sources

Topic 3

Identify and Describe Risks Such as Bias, Hallucinations and Other Factors

Some common risks when using AI are the following:

Bias - AI models may incorporate "biased artifacts" from historical medical data, potentially perpetuating diagnostic inequities.

Hallucinations or Confabulation - The generation of false, fabricated or misleading content by an AI model that often appears highly convincing, fluent and plausible.

Black Box - A characteristic of many AI systems in which both the internal reasoning processes and the conditions of model development (such as training data, weighting and design choices) are not transparent, limiting the user’s ability to understand, evaluate or fully trust how outputs are generated.

Example: An AI system recommends a specific treatment plan for sepsis. While it may provide a rationale in natural language, the clinician cannot determine:

- how the model prioritized certain clinical variables over others

- whether the training data included representative patient populations

- whether embedded biases influenced the recommendation

As a result, both the decision pathway and the foundational basis of the model’s knowledge remain partially opaque.

There are other risks to keep in mind that are focused on the person using AI rather than the AI itself, such as:

Automation Bias/Complacency - A cognitive shortcut where a person favors suggestions from an automated system over their own reasoning or contradictory evidence.

Cognitive Offloading - The externalization of cognitive processes to reduce cognitive load, often involving tools or external agents such as notes, calculators or digital tools like AI.

These risks can be especially challenging because an AI system’s output can appear, on the surface, to be accurate and high-quality, and therefore trustworthy. It’s important for the individual to stay “in the loop” and to use AI as a helpful tool rather than letting it do all of the thinking.

Topic 4

Quick Tips to Evaluate AI Output

We’ll cover this in more detail in Module 6, but it’s important enough that you should start considering these ideas now. Part of our responsibility when working with AI is to be vigilant in ensuring the information we use is accurate and trustworthy. To do that, we should:

Maintain a healthy sense of skepticism. It’s easy to start trusting AI, so keep questioning its output and make sure you are using AI to help you rather than relying on it too heavily.

Trust but verify. Double-check important information, such as medical facts, against trusted sources.

Focus on using AI tools as helpers rather than as systems that can do the work for you. Use LLMs to explain concepts, not to replace your own reasoning.

Stay alert to confidence without accuracy. Maintain a critical mindset: question outputs, verify accuracy and don’t assume confidence equals correctness.

Practice asking your AI questions about the validity and accuracy of the output:

- Ask when it was last trained.

- Check whether it has live data access.

Ask your AI assistant to cite sources, and then check the linked sources, especially in clinical or research contexts.

Supplementary Materials & Resources

Supplementary Materials

- PowerPoint

- Flashcards

- Google NotebookLM or GEM

Resources

Articles

Abdulnour, R. E. E., Gin, B., & Boscardin, C. K. (2025). Educational Strategies for Clinical Supervision of Artificial Intelligence Use. New England Journal of Medicine, 393(8), 786-797.

Izquierdo-Condoy, J. S., Arias-Intriago, M., Tello-De-la-Torre, A., et al. (2025). Generative artificial intelligence in medical education: Enhancing critical thinking or undermining cognitive autonomy? Journal of Medical Internet Research, 27, e76340.

Videos

Websites

- The 4 Stages of Training an LLM from Scratch (Explained Clearly) (2025)

- How Large Language Models Work: From Zero to ChatGPT (2023)

Note: The text and graphics in these modules were co-developed with the assistance of generative AI tools such as OpenAI’s ChatGPT, Google’s Gemini and NotebookLM, and Microsoft’s Copilot, drawing on the indicated reference materials. The materials were then edited for relevance and accuracy.